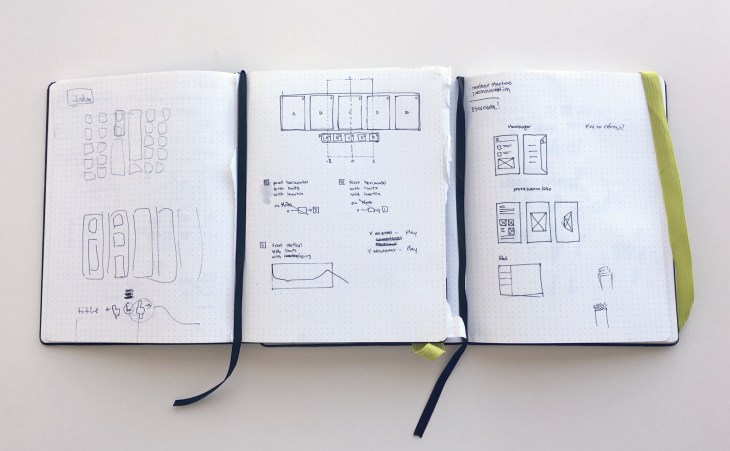

A wireframe is a visual representation, or mockup, of an interface that uses only simple shapes. Wireframes are devoid of design elements such as colors, fonts, or images, and are used to communicate ideas and represent the layout of a website in the early stages of a project.

They are usually produced before the design phase begins, to get business approval of the structure of the design itself.

It is important that:

- Elements on the page have a good aspect ratio for the content they contain.

- White space gives elements room to breathe, but is never so large that connected elements get lost.

- If more than one header is shown, the headers that add relevant information are large, whilst the others are either small (e.g. where the header is mostly implied by the content) or omitted (where the header is completely implied by the content).

- Vertical space is used wisely.

- As a rule of thumb, multi-line text and headers that repeat down the page are left-justified, while lone lines can be centered. With tabular data and forms, the left column can be right-justified.

To avoid:

- Extraneous lines and ‘chartjunk’.

- Unnecessarily repeated elements on the same page.

- Inconsistent layout choices.

- Information of minimal relevance to common tasks.